This is something I’ve been spending a lot of time on alongside completing my Reader Top 30, and I feel like I have things where I want them, so I wanted to write another detailed post about my new creation, the SONAR score. If you’ve been around for a while, you may have read my introductory pieces here and here back in November. Like any good social scientist, I set out with a goal: to find a way to evaluate minor league prospects across all leagues, factoring in every aspect of the game that can be accurately measured, in order to assess what prospects have done and which players might succeed or struggle going forward based on their peripherals. Along the way, I encountered many difficulties, including the sheer amount of work it takes to code over 5,000 players into a system, and then figuring out whether the formulas I used were accurate, helpful, or off the mark. My test run was published in November, and then I started doing position-by-position breakdowns. It was during this process, while looking at the numbers in depth (and based on reader feedback), that I discovered some of the flaws in the system, and I set about fixing the errors, making adjustments, and trying to make the system the best it can be. As anyone who tries to create something new knows, the first run (or the first 10, in this case) is rarely perfect. But a good scholar always tries to improve, to figure out what is missing, and to make the work the best it can be. So that is where we are now. Check below for the details.

I’m not going to rehash all of the details from the introduction pieces linked above; most of the tenets of the study are still the same. The goal is to place all prospects on an even footing: adjusting their raw batting and pitching lines for the league they play in, factoring in their age relative to their level, the size of the sample, and the impact of their home park, and then drilling down to the core peripherals that indicate true levels of skill, removing as much luck as possible. As I’ve openly stated from the beginning of this project, the end product, the SONAR score, is not meant to replace a scouting report or to be the sole measure by which you judge a prospect. Far from it. The SONAR score is meant to be another piece of data, either a confirmation point or a jumping-off point. If I read about Prospect X and scouts say he’s one of the best prospects in baseball, I want to be able to look at his score and see whether his performance has backed up their claims. On the other side, if I see a player with a very high score, I want to investigate him further, figure out why he’s been successful, and compare his numbers against his scouting reports. Across the prospect world, different outlets assign different weights in their evaluations. Some evaluators will rank a guy with a terrible batting line in their Top 50 based on his raw tools and abilities, even if he hasn’t hit a lick or retired many batters as a pro. Others weigh performance more heavily and will rank players who’ve reached the upper minors ahead of players further from the majors but with possibly more upside. The best evaluators take all of the information they have, blend it together, and come up with a ranking or evaluation of a player. But even the best guys miss on prospects every year, and sometimes they miss badly.
It’s the nature of the prospect game, where a guy can go from a .220 hitter with seemingly no skills to a bona fide stud prospect in a matter of a month. Players develop at different speeds: some guys break out at a very young age and never look back, while others break out much later in their careers, for whatever reason. Some guys develop new skills, radically change their swing or delivery, add a new pitch, or find some other twist that alters their development. And some guys just never break out, or never perform up to expectations. Baseball is really hard, and some guys with seemingly endless potential simply never make it. Because of that, you can look back at years past, see guys ranked in the Top 10 of prospect lists, and say “who?”…such is the nature of the beast. SONAR is simply meant to be one more piece of the pie, one more piece of data to use in evaluating prospects.

So, a quick summary of what SONAR tries to do:

* Adjust for league/level – How does a line in the California League compare to a line in the Florida State League?

* Adjust for home park – Hitters playing half their games in pitchers’ parks deserve to have their numbers adjusted.

* Adjust for age – A .300/.400/.500 line for a 22-year-old in A ball is different from a .300/.400/.500 line for a 19-year-old in A ball.

* Measure the core components – Getting on base, hitting for power, and using your speed. For pitchers, it’s the three true outcomes: strikeouts, walks, and home runs.
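To make the first three bullets concrete, here is a minimal Python sketch of how league, park, and age adjustments could work in principle. It is not the actual SONAR formula (those specifics aren’t shared here). The function names, the convention that a park factor of 1.00 is neutral with only half of it applied (since a player plays roughly half his games at home), the 5-points-per-year age bonus, and the league numbers in the example are all assumptions for illustration.

```python
def park_neutral_index(player_rate: float, league_rate: float, park_factor: float) -> float:
    """League-relative index for a rate stat such as OBP (100 = league average).

    Hypothetical convention: park_factor 1.00 is neutral and values below 1.00
    mean a pitcher's park. Only half the park effect is removed, because a
    player plays roughly half his games at home.
    """
    effective_pf = (park_factor + 1.0) / 2.0
    return 100.0 * (player_rate / effective_pf) / league_rate


def age_bonus(age: float, level_avg_age: float, points_per_year: float = 5.0) -> float:
    """Hypothetical: add points for each year a player is younger than his level's average age."""
    return points_per_year * (level_avg_age - age)


# The same .400 OBP in a league averaging .340 (made-up numbers), once in a
# neutral park and once in a pitcher's park with an assumed factor of 0.90.
neutral = park_neutral_index(0.400, 0.340, 1.00)
pitchers_park = park_neutral_index(0.400, 0.340, 0.90)
print(round(neutral, 1), round(pitchers_park, 1))  # 117.6 123.8

# The .300/.400/.500 example: a 19-year-old vs. a 22-year-old in a league
# whose average age is assumed to be 21.
print(age_bonus(19, 21), age_bonus(22, 21))  # 10.0 -5.0
```

The point of the example is only the direction of each adjustment: the same line is worth more in a pitcher’s park and from a younger player.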

And now, the changes I’ve made since the initial release:

* Changes to core components – I’ve found a better way to evaluate the core components for a hitter: getting on base and raw power. I don’t want to get into the specifics of the formula, but I was finding quite a few guys with noisy numbers driven by flukish BABIP results in small samples, so I found a way to weed most of these out. I was also able to improve the way the system treats power. The result should be a much cleaner read on the core skills for hitters.

* The addition of speed – One of the biggest things I wanted to address was how to include the stolen base in the score. As I highlighted in my original piece, stolen base numbers in the minors are often a bit misleading: pitchers have less refined pickoff moves, many minor league catchers lack major league catching skills, and base runners are still learning to steal with solid technique. Nevertheless, based on early feedback, and from looking more closely at the numbers, I figured out a way to incorporate speed that I felt comfortable with. It drastically changes some players’ numbers, but I feel the changes are merited. As you’ll see with a player like Anthony Gose, the change was massive, but speed is his best raw tool and had to be addressed. If you discount Gose’s speed, you take away most of his present value and future potential, because his game will always be predicated on speed. The stolen base itself is a much-debated item in the statistically astute community, but players who can steal tons of bases at a high success rate have value, especially if the speed accompanies an otherwise strong skill set.

* Rewarding contact – This ties into the first point about core skills. Before, there was no real adjustment for guys who showed excellent contact skills or, conversely, for guys who struck out a ton. I felt this was an important adjustment to make. Guys who strike out at alarmingly high rates in the minors are greater risks, because the pitching doesn’t get any easier as they climb the ladder. On the other side, guys who make tons of contact will always have a chance to hit for average, depending on their luck on balls in play. Contact rate isn’t weighted as heavily as some of the other core skills, but it’s an important factor to consider.

* Re-weighted pitching components – I tweaked the formula slightly for pitchers, giving a bit more weight to the strikeout. A strikeout is less harmful to a hitter than it is helpful to a pitcher: when the ball is put in play, anything can happen (good or bad), but when the batter can’t hit it, there is only one outcome. Guys who show swing-and-miss stuff in the minors have a better chance of reaching a higher ceiling in the majors. Control and command matter too, since guys who have no idea where the ball is going face a tougher climb, but the ability to make a batter swing and miss is arguably the most important skill a pitcher can have. I also found that guys who showed ridiculously good control in the low minors, but lacked swing-and-miss stuff, were often found out at higher levels, which is in line with the consensus opinion of scouts.

* Altering the scale to evaluate all prospects – With all of the tweaks to the system, the range where prospects generally fall has changed, and the new scale applies to both pitchers and hitters. There are 5,598 players ranked in the system: 2,684 position players and 2,914 pitchers. A few of these guys may be eliminated as I go through individual teams and remove players who no longer have rookie eligibility, but you get the idea of how many guys are in the system. The raw average score, that is, the average of all 5,598 players, is -6.57, which means the “average” player in the SONAR universe has a score below zero. This wasn’t anything I planned, but intuitively it makes sense. Of the roughly 5,600 players I looked at, only a small fraction will be stars, a slightly larger subset will go on to become above average big leaguers, a slightly larger subset will be average big leaguers, a larger group will be 4A/up-and-down guys, and the large majority will never make it even as bench players in the majors. It’s a numbers game: there are only 750 big league spots available (25-man roster x 30 teams), plus a few more on the 40-man roster for players who will shuttle up and down before either becoming fixtures at the big league level or falling off the map. The standard deviation for the entire sample was about 20 (20.67), which also makes some sense. The difference between the highest score in the system (Jason Heyward, 138.20) and the lowest (Jerry Gil, -193.5) was 331.7. The gap between the average prospect (-6.57) and the lowest score (186.93) was greater than the gap between the average prospect and the highest score (144.77). 2,374 players had a score of 0.01 or higher, but only 447 players had a score of 14.00 or higher, which is essentially one standard deviation above the average (-6.57 + 20.67 = 14.10).

Going further up, there are 76 prospects with a score of 40.00 or higher, 42 with a score of 50 or higher, and 19 with a score of 70 or higher. So the distribution is not a standard bell curve; it is skewed toward the below average, but I think that’s intuitive.
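The arithmetic in the scale discussion is easy to check, and the same summary can be recomputed for any list of scores. Below is a minimal sketch using Python’s standard `statistics` module; the `summarize` helper and its default thresholds are illustrative, and the only real numbers in it are the ones quoted above. Skewness is included as one way to quantify “not a standard bell curve”: a negative value indicates a longer tail below the mean.

```python
import statistics


def summarize(scores: list[float],
              thresholds: tuple[float, ...] = (0.01, 14.0, 40.0, 50.0, 70.0)) -> dict:
    """Mean, population SD, range, skewness, and head-counts at or above each threshold."""
    mean = statistics.fmean(scores)
    sd = statistics.pstdev(scores)
    # Population (Fisher-Pearson) skewness: negative means a longer lower tail.
    skew = sum((x - mean) ** 3 for x in scores) / (len(scores) * sd ** 3)
    return {
        "mean": mean,
        "sd": sd,
        "range": max(scores) - min(scores),
        "skew": skew,
        "counts": {t: sum(1 for x in scores if x >= t) for t in thresholds},
    }


# Arithmetic check using only the figures quoted in the post.
MEAN, SD, HIGH, LOW = -6.57, 20.67, 138.20, -193.5
print(round(HIGH - LOW, 2))                         # 331.7  (reported range)
print(round(MEAN + SD, 2))                          # 14.1   (about the 14.00 cutoff)
print(round(MEAN - LOW, 2), round(HIGH - MEAN, 2))  # 186.93 144.77
```

Run against the full list of 5,598 scores, `summarize` would reproduce the mean, SD, and tier counts above.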

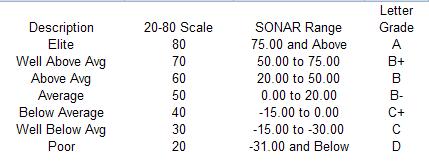

The 20-80 scouting scale is considered the gold standard for discussing a prospect’s tools, so to keep this score applicable to it, I’ve developed a chart for easy reference.

Pretty self-explanatory stuff. Because the score is an exact number, you can compare two prospects within the same range and rank them however you choose, but a rough guideline is often helpful.
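The chart’s cutoffs aren’t reproduced in the text, so they can’t be recovered here. As a rough stand-in, the 20-80 scale is often described as 50 = average with each 10 grade points equal to about one standard deviation. The sketch below applies that convention to the reported mean (-6.57) and standard deviation (20.67). It is an assumption, not the SONAR chart, and because the distribution is skewed the extremes are only approximate.

```python
def to_20_80(score: float, mean: float = -6.57, sd: float = 20.67) -> int:
    """Hypothetical mapping: 50 = average, 10 grade points per SD, rounded to the nearest 5."""
    z = (score - mean) / sd
    grade = 50 + 10 * z
    grade = 5 * round(grade / 5)   # scouting grades are usually reported in steps of 5
    return max(20, min(80, grade))  # clamp to the ends of the scale


print(to_20_80(18.90))   # 60 (a score of 18.90 sits a bit over one SD above average)
print(to_20_80(138.20))  # 80 (clamped at the top of the scale)
```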

As I mentioned a while back, the current version of SONAR uses only one year of data, 2009. After the 2010 season, I will again compute a one-year score, but I’ll also produce a composite score that blends the 2009 and 2010 data. Because prospects can change so rapidly, I think it’s important to place a strong weight on the most recent data, but a historical track record is also important and definitely something to consider.
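How the blend will be weighted isn’t specified here; one simple option is to let each older season count a fixed fraction of the next newer one. In this sketch the `composite` name and the decay of 0.5 (so 2010 would count twice as much as 2009) are assumptions chosen only for illustration.

```python
def composite(scores_by_year: dict[int, float], decay: float = 0.5) -> float:
    """Weighted average in which each older season counts `decay` times the next newer one."""
    years = sorted(scores_by_year, reverse=True)   # most recent season first
    weights = [decay ** i for i in range(len(years))]
    total = sum(w * scores_by_year[y] for w, y in zip(weights, years))
    return total / sum(weights)


print(composite({2009: 10.0, 2010: 40.0}))  # 30.0
```

A smaller decay leans harder on the newest season; a decay of 1.0 would treat both years equally.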

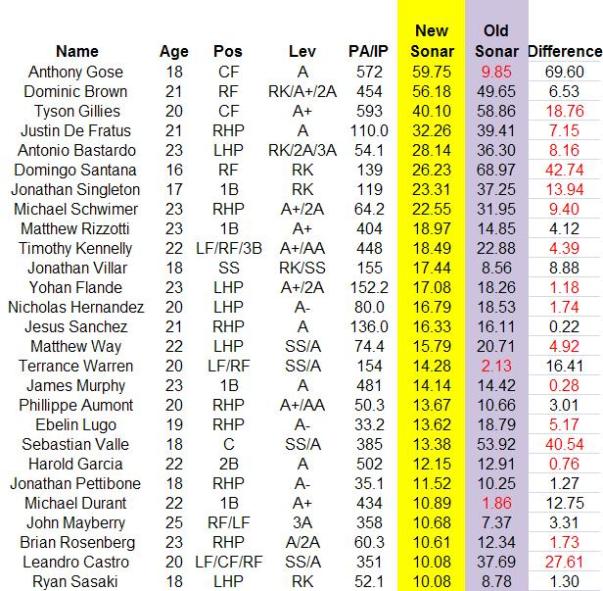

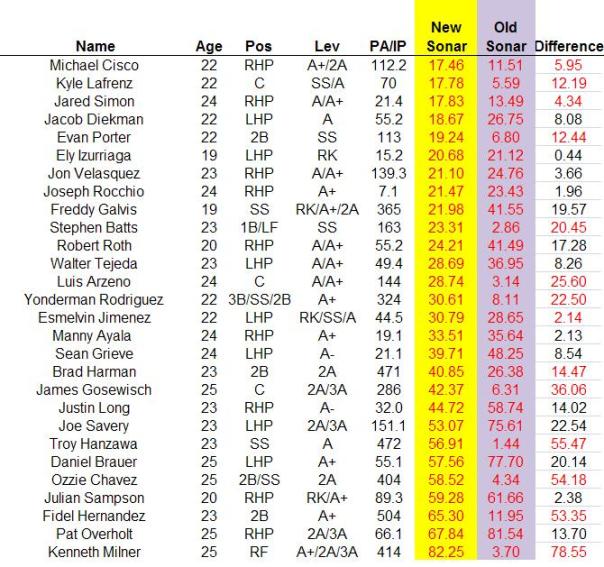

So now that you know about the changes I’ve made, as well as the system in general, I wanted to present a comparison chart for Phillies prospects showing how their scores changed from the old version to the updated one. I’ll split the graphic into a few pieces and break it down part by part.

* As I mentioned above, Anthony Gose was one of the biggest beneficiaries of the changes, and it’s intuitive. His approach at the plate remains raw, but he has a number of things working in his favor. First, he was one of the youngest players in the SAL, which boosts his score. More importantly, his speed ranks at the top among all minor league players: he stole 76 bases at a 79% success rate, which is elite. Domonic Brown also sees a bump up as a result of his work on the base paths.

* Tyson Gillies actually sees his score drop, and part of this is his speed working against him. He managed an impressive 44 stolen bases, but at a 69% success rate, which doesn’t help his score the way Gose’s numbers help him. Gillies also derived some of his on-base average from 18 HBP in 2009, and while part of that is skill, part of it is somewhat unpredictable; an adjustment to the formula helps account for this. His score still puts him comfortably in the above average category.

* The biggest drop on the list is Domingo Santana, which again goes back to the feedback several people gave when discussing the scores. The big area where Santana was hit was the contact aspect of his skill set, as he posted a very high K rate in his 139 PA. His score still places him in the above average tier, and we all know his upside is substantial, but the swings and misses add more of a hedge, lowering his score a bit. Still, a score of more than 25.00 in a very limited sample is very impressive; it’s just more in line with what you would expect to see. In the same light, Sebastian Valle sees his score dip significantly, as the emphasis on drawing walks as a core skill hurts his overall profile. I highlighted this in my writeup of him in the Top 30.

* Travis Mattair and Derrick Mitchell receive big bumps here. With Mattair, the emphasis on drawing walks helped boost his score. Mitchell’s surface numbers were bad in 2009, but underneath he showed very nice power in a league that is tough on hitters, and he wasn’t obscenely old for Clearwater.

* Quintin Berry gets a bump up, largely on the basis of his excellent speed and stolen base potential, as well as his ability to draw a walk. His score is held down by his age, his propensity for the strikeout, and his lack of power. Aaron Altherr becomes a positive value prospect, as he drew a few walks and also swiped a few bases in his very limited sample.
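The Gose/Gillies contrast above comes down to how stolen bases and caught stealings trade off. A common sabermetric shortcut values a steal at roughly +0.2 runs and a caught stealing at roughly -0.4 runs; treat those figures, and the `sb_runs` helper itself, as assumptions rather than SONAR’s actual speed component. Caught-stealing totals are backed out of the rounded success rates, so they are approximate.

```python
def sb_runs(steals: int, success_rate: float,
            sb_value: float = 0.2, cs_cost: float = 0.4) -> float:
    """Approximate net runs from base stealing, using assumed linear weights."""
    attempts = steals / success_rate
    caught = attempts - steals
    return steals * sb_value - caught * cs_cost


def break_even_rate(sb_value: float = 0.2, cs_cost: float = 0.4) -> float:
    """Success rate at which the value of steals exactly cancels the cost of caught stealings."""
    return cs_cost / (sb_value + cs_cost)


print(round(sb_runs(76, 0.79), 1))  # Gose: 7.1
print(round(sb_runs(44, 0.69), 1))  # Gillies: 0.9
print(round(break_even_rate(), 3))  # 0.667
```

Under these assumed weights, Gillies’ 69% sits just above break-even, so his 44 steals add little, while Gose’s volume at 79% adds several runs.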

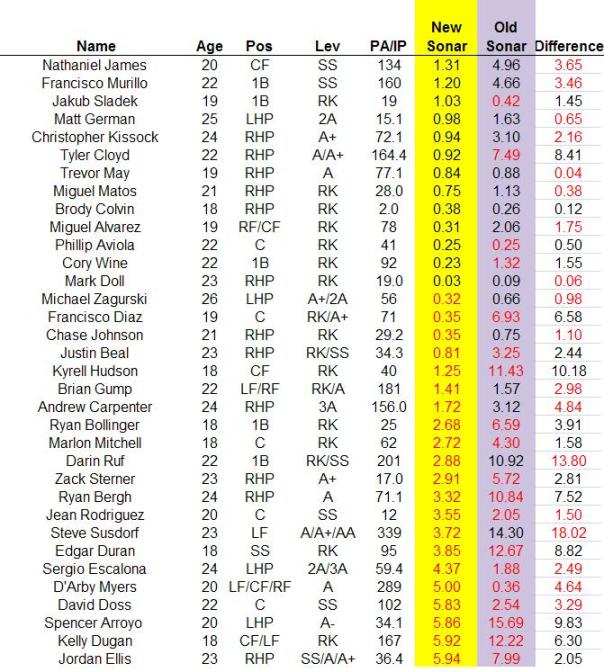

* Tyler Cloyd gains some value here thanks to the tweak that placed a bit more emphasis on home run suppression. Guys who are home run prone in the minors are often even more home run prone at the major league level, so it is definitely a peripheral to pay close attention to.

* Steve Susdorf sees his stock take a turn down, as he possesses almost no speed, and his walk rate and power numbers were just average for his age relative to his level.
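The re-weighted pitching formula isn’t spelled out, but the idea of counting strikeouts a bit more heavily than walks and home runs can be sketched with league-relative per-nine rates. The weights (1.5 for strikeouts, 1.0 for walks and homers), the ratio form, the function names, and the league rates in the example are all assumptions for illustration.

```python
def per_nine(count: float, innings: float) -> float:
    """Rate per nine innings (innings as a true decimal, e.g. 77.33 for 77 1/3)."""
    return 9.0 * count / innings


def tto_index(k9: float, bb9: float, hr9: float,
              lg_k9: float, lg_bb9: float, lg_hr9: float,
              w_k: float = 1.5, w_bb: float = 1.0, w_hr: float = 1.0) -> float:
    """Hypothetical three-true-outcomes index: 0 = league average, positive = better.

    Strikeouts above league average add; walks and homers above league average subtract.
    """
    k_term = w_k * (k9 / lg_k9 - 1.0)
    bb_term = w_bb * (bb9 / lg_bb9 - 1.0)
    hr_term = w_hr * (hr9 / lg_hr9 - 1.0)
    return 100.0 * (k_term - bb_term - hr_term)


# Made-up league: 8.0 K/9, 3.5 BB/9, 0.8 HR/9. A pitcher who is league average
# except for allowing half as many homers gets credit purely for HR suppression.
print(round(tto_index(8.0, 3.5, 0.4, 8.0, 3.5, 0.8), 1))  # 50.0
```

Because of the heavier strikeout weight, beating the league’s K rate by 20% counts for more here than beating its walk rate by 20%.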

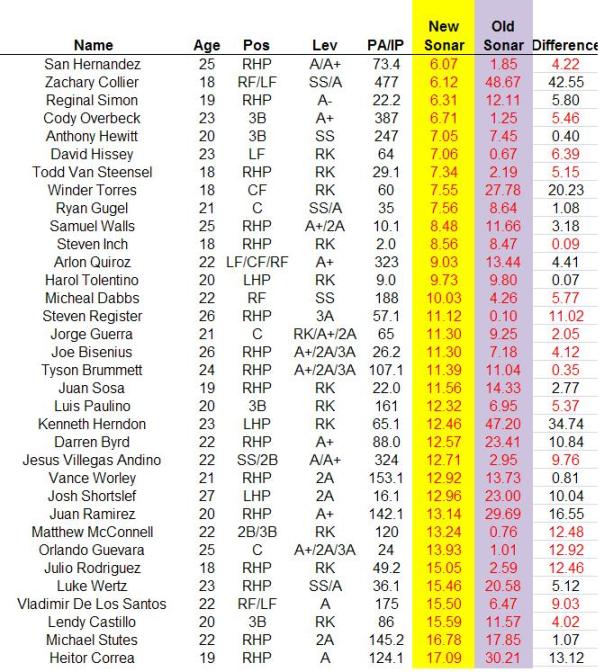

* A bunch of big movers here, including Zach Collier and Winder Torres. Collier, especially, still showed a hint of secondary ability even amid his massive struggles in 2009. He stole 20 bags and drew 32 walks, although his contact rate is really holding his score down. It wasn’t a great year by any means, but not everything was negative.

* Juan Ramirez still shows up on the wrong side of the ledger, but the altered formula likes his performance a bit more. We really won’t know much about him until we see him pitch in a somewhat neutral environment. Julio Rodriguez sees his score drop significantly, as his HR rate is a major red flag going forward. Still, there’s a lot to like there, and a full season (or at least 70+ innings at Williamsport) should give us a better idea.

* Freddy Galvis and Joe Savery see a bump up, but both guys still have a long way to go.

Over the next few days/weeks, I hope to have the SONAR page at the top of the site updated with new spreadsheets for all 30 teams, and then the updated position by position breakdowns. For me, the initial output a few months ago was very important, because it allowed me to have something to look at, and when I went through the results on a more individual basis, it helped me to uncover some of the flaws in the system, and some of the things that needed to be improved. The feedback given originally was also very helpful, and I’m hoping that as the season progresses and we look back at this data, we can figure out ways to even further improve the system. I’m somewhat limited in what I can do, because I only have access to basic data, but I think for what I want SONAR to be (another data point), it can be very useful.

It takes a secure person to admit that something he worked hard on needed major improvements. It really shows good character to publicly admit that the readers influenced some of the changes.

Great work. I almost can’t stop looking at it.

It seems like a red flag to me that there are guys populating the upper reaches of the list like Travis Mattair and Michael Durant who really didn’t even have good 2009s.

I am really impressed with this system. The time and effort that it must take to compile all these statistics and devise this tool is commendable. Thank you for providing fans with this service.

I think this version is a bit better. The beta version was a great step, though. I think it’s important to remember that PECOTA & WAR went through several iterations before the data was ever made public…James tossed out a beta version for feedback, took it, and produced a far cleaner model this time around. Also, remember BP revises its PECOTA modeling every year based on new information and long-term studies.

I like the 2.0 edition better. It seems to be highlighting guys who had good seasons relative to age.

It appears heavily weighted towards hitters that control the plate (make contact, and especially take walks). That’s why a few of the guys with good triple slash lines are lower. It’s a philosophical thing – not many of those free swinging guys make it unless they change their approach.

Ditto for the pitchers. Control is a major component.

If I could suggest one tweak it would be to again adjust the age/performance factor.

Another would be to factor in position. Guys like Durant, Rizzotti, and Murphy have high SONAR scores because they had statistically decent/good seasons for baseball players. However, the numbers weren’t so good coming from the 1B position. We would all be very excited about these guys if they had a SS, 2B, or C designation next to their names instead of 1B. Maybe something to consider for version 3.0?

The positional aspect is something I considered, but it’s tough to figure out how to adjust for it properly. A shortstop is definitely more valuable than, say, a left fielder, but if the shortstop is terrible defensively and a shortstop in name only, he’s going to get extra credit he doesn’t deserve, if that makes sense. It’s the same reason I don’t include any defensive adjustment in the score: I don’t think there is a good way to do it, because defensive statistics at the minor league level are very crude and almost worthless. But it’s definitely something I’ll look at heading into next year.

I agree with the decision not to use positional adjustments in the assessment. That’s something we should be capable of doing on our own by simple reasoning. We’re all quite familiar with the C-SS-CF-2B-3B-RF-LF-1B value scale…I got that right, didn’t I? I’ve seen some flip CF and 2B, but I’d have to think CF is tougher than 2B (it should be noted that WAR adjusts the same for CF, 2B & 3B, as well as the same for LF & RF, which is a tad asinine).

Version 2.0 seems to make a bit more sense based upon the numbers, although I was a big fan of Version 1.0 as well. Congrats on all of the hard work and seeing something that you liked.

One thing I was surprised about was that Hewitt’s numbers didn’t change much from 1.0 to 2.0. I thought for sure with the added emphasis on contact he would take a big hit. It gives me a bit more hope that he has a chance.

I am impressed with all the work that has been put into this.

But it seems like there is an easy way to test SONAR 2.0 that does not require waiting several years to see how these guys turn out.

Why not go back 10 or so years and run the numbers for Phillies’ minor leaguers over a several-year period? If the system has validity, we should, on average, see high scores for those who made it and low scores for those who did not. And, presumably, it should highlight why the busts were busts and how the surprises rose from obscurity. Moreover, we should see some interesting patterns distinguishing flashes in the pan from those who grew into their bodies, or ballplayers vs. toolsheds, etc.

Of course, I do not have to do the work…

The problem with backtesting is the park adjustment. Park adjustment is an important factor in the equation, and I simply don’t have access to the old park factors. Not to mention that all the calculations are done in Excel, which requires me to gather all the numbers by hand.

If someone were to find the three-year weighted park factors (that is important; 3-year data is necessary) for past years, like 1999-2001, 2000-2002, etc., then I could do it, but it would take time.
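For anyone who wants to help with that, a basic multi-year park factor is usually built by comparing runs per game in a club’s home games with runs per game in its road games. This sketch pools three seasons of totals, which effectively weights each year by games played; the `TeamSeason` fields, the pooling choice, and the numbers in the example are assumptions, and published park factors may use a different method.

```python
from dataclasses import dataclass


@dataclass
class TeamSeason:
    runs_in_home_games: int   # runs scored by both teams in this club's home games
    home_games: int
    runs_in_road_games: int   # runs scored by both teams in this club's road games
    road_games: int


def multi_year_park_factor(seasons: list[TeamSeason]) -> float:
    """Pooled park factor: home runs-per-game divided by road runs-per-game (1.00 = neutral)."""
    home_rpg = sum(s.runs_in_home_games for s in seasons) / sum(s.home_games for s in seasons)
    road_rpg = sum(s.runs_in_road_games for s in seasons) / sum(s.road_games for s in seasons)
    return home_rpg / road_rpg


# Three made-up seasons for a pitcher-friendly park.
seasons = [TeamSeason(630, 70, 700, 70),
           TeamSeason(640, 70, 690, 70),
           TeamSeason(620, 70, 710, 70)]
print(round(multi_year_park_factor(seasons), 3))  # 0.9
```

Pooling three years smooths out single-season noise, which is one reason a multi-year factor is preferred over a one-year number.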

Are park adjustments like level adjustments? I’m trying to understand. I heard Gillies benefited from his speed at the lower levels, and scouts said some of his infield hits would be outs at higher levels. Is that taken into account? Sorry, just trying to figure out this system.

Mikemike, park adjustments are somewhat like level adjustments in that they adjust for the environment in which the athletes play. Ballparks can vary greatly within leagues. Trenton for example in the Eastern League plays as an extreme pitchers’ park due to its location directly on the Delaware River.

Right. Lakewood is a notorious pitcher’s park, whereas Reading is more of a hitter’s park. Various leagues are known to be more offense oriented (Cal League, Pacific Coast League) or pitching oriented (New York-Penn League, Florida State League), but within those leagues there are some parks that play as polar opposites to the league norm, and that has to be accounted for in a player’s evaluation.

Back in the 1970s and before, Reading used to be known as a hitters park. Now it’s a pitcher’s park. I wonder what happened.

Intuitively, these results seem quite a bit better than the original product. Good job identifying characteristics that were skewing data. I’m impressed.

As to the positional aspect, rather than weighting position in the score, it could be rated in scale. I don’t know exactly how the numbers shake out, but I’d imagine that a SONAR 20 SS would be a better prospect than a SONAR 25 1B. This way particular players do not get upgraded or downgraded for a position they may not be playing, but there’s still some basis of comparison.

Reading is still a slight hitters’ park, and an extremely good home run park. Parks can change though, as changes in seat orientation (eliminating foul ground for instance), changes in outside dimensions, and changes that result in different wind configurations can have an effect. Sometimes the entire league changes parks to the point where an unusual park is no longer unusual.

Fenway Park illustrates the point. In the teens, it was EXTREMELY difficult to pop a home run in Fenway Park. Babe Ruth hit 38 of his 49 Red Sox home runs on the road, and it was into the ’30s when the team finally topped 50 home runs in a season. Later as the Monster was built it became the most extreme hitters’ park in the American League. Now it’s closer to neutral as other new ballparks match its dimensions in some fashion.

Almost forgot to add this link. The data is a couple years old, but it gives you an idea of the park factors around the minors.

http://www.baseballthinkfactory.org/files/oracle/discussion/2008_minor_league_park_multipliers/

True, and PP did do the pieces where he sorted the data by position, which gives a good feel for where the guys shake out relative to their positional peers.

Biggest surprises? Derrick Mitchell and Trevor May for me.

Could you give an explanation on why Trevor May (one of our top pitching prospects) doesn’t have a higher SONAR score?

My guess to the Trevor May question would be due to his high walk rate…

On May

1. The walk rate is a big red flag.

2. Lakewood is a very pitcher-friendly park, which brings his score down.

3. He threw only 77 innings, and a smaller sample means his score will be lower.

Basically, his K rate, while outstanding, doesn’t do much more than offset the walk rate. 5.0 BB/9 is a very high number.

Obviously from a scouting perspective (and in my personal opinion) he’s one of our best prospects. But he does carry risk because of his lack of control. And while he did a nice job of keeping the ball in the park, his GB rate was only 36.6% last year, which is a worry. It might not hurt him quite as much in the FSL in 2010, because it’s a heavy pitchers’ league, but future home run problems coupled with lots of walks are a bad recipe for a pitcher.
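The sample-size point can be illustrated with simple regression toward the mean: treat the prior as a fixed number of “phantom” innings at the population average and blend it with the observed result. The 100-inning prior weight, the raw score of 30, and the helper names below are hypothetical; only the 77 innings, the 5.0 BB/9, and the -6.57 system average come from this page.

```python
def walks_from_rate(bb9: float, innings: float) -> float:
    """Back out an approximate walk total from a per-nine rate."""
    return bb9 * innings / 9.0


def regress_to_mean(observed: float, sample: float,
                    prior_mean: float, prior_weight: float) -> float:
    """Weighted blend of an observed value and a prior mean.

    prior_weight is in the same units as sample (here, innings pitched).
    """
    return (observed * sample + prior_mean * prior_weight) / (sample + prior_weight)


print(round(walks_from_rate(5.0, 77), 1))  # 42.8 walks in 77 innings

SYSTEM_AVG = -6.57  # average SONAR score quoted in the post
raw = 30.0          # hypothetical unregressed score
print(round(regress_to_mean(raw, 77, SYSTEM_AVG, 100), 2))   # 9.34 with 77 innings
print(round(regress_to_mean(raw, 154, SYSTEM_AVG, 100), 2))  # 15.6 with twice the innings
```

With the same raw performance, the smaller sample is pulled further toward the below-zero average, which matches the point that fewer innings mean a lower score.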

“The walk rate is a big red flag.”

Or it is an area a pitcher might more easily improve. A red flag in an area that is beyond normal improvement might scare me more. Plus, a high walk rate in an older player should carry more weight.

That seems like classic tools vs. development. May needs great natural ability to get the Ks and presumably can work to reduce the walks. I like this version of SONAR better than the first, but I can’t see the validity of Rizzotti and Durant as prospects. Perhaps it is the position thing. This may be a case where incorporating defensive ability from the scouting results would allow a factor for position and defensive skill. I suspect Phuturephillies will need to do significantly more tweaking. When your own top 30 differs so drastically from what your scores are telling you, it says that in the end you still trust other evaluations a lot more than SONAR in its release 2.0.

There’s a guy I liked for a couple of years who jumped on version 2. Unfortunately, I’ve been told that TJ Warren was released.

Yeah I left him on the list just as a reference.

I think it’s important to understand the context of what the score entails. It’s looking at core abilities, i.e., getting on base, hitting for power, and stealing bases, and then it figures out how well the player did those things, considering his age, what league he played in, and how his park impacted him.

Rizzotti put up a .263/.351/.454 line in the FSL. The average batting line in the FSL was .252/.322/.363. That’s all players, including the legit prospects and the career minor leaguers who have no business being in the FSL. His 11.9% BB rate was impressive, and his ISO was close to .200 (.191). Among all 1B prospects, he obviously doesn’t rank near the top. He doesn’t have amazing power, doesn’t hit for a really high average, and won’t draw an obscene number of walks. So basically he won’t ever be a star. But at the same time, he does have one above average core skill (the walks), and his other secondary skill (power) isn’t terrible. His score of 18.90 puts him in the “average” tier, and really, he’s an average hitter. Because of the offensive demands of 1B, he’s likely not going to make it here. Nothing about his score or his numbers indicates a star, but they do indicate he’s a decent hitter.
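The comparison to the league line can be made explicit. This sketch uses only the two slash lines quoted above; the `relative_line` helper and the “+” indexes (100 = FSL average) are illustrative, and it ignores the park and age adjustments that SONAR also applies.

```python
def relative_line(player: tuple[float, float, float],
                  league: tuple[float, float, float]) -> dict[str, float]:
    """Compare an AVG/OBP/SLG line to a league line (100 = league average)."""
    avg, obp, slg = player
    lg_avg, lg_obp, lg_slg = league
    iso, lg_iso = slg - avg, lg_slg - lg_avg  # isolated power = SLG - AVG
    return {
        "OBP+": 100 * obp / lg_obp,
        "ISO": iso,
        "lgISO": lg_iso,
        "ISO+": 100 * iso / lg_iso,
    }


rizzotti = relative_line((0.263, 0.351, 0.454), (0.252, 0.322, 0.363))
print({k: round(v, 3) for k, v in rizzotti.items()})
```

His OBP comes out about 9% above the league, and his .191 ISO is roughly 1.7 times the league’s .111, which is the “one above average skill, one decent skill” profile described above.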

Who is E. Wasserman, who pitched today? Never heard of him.

Wasserman has kicked around the White Sox organization for several seasons, coming over as a minor league free agent. He performed poorly in his MLB outings but his minor league numbers are very good. He’s a sidearm pitcher who generates groundballs.

ty alan for the info

The fact that first basemen need some kind of position adjustment has already been covered. The other position that seems overrated is relief pitcher. Relievers’ numbers are generally better than starters’ numbers, and a number of relievers are ranked higher than any of the starters. Something is wrong when a guy like DeFratus is ranked in that high a prospect tier. Scouts say his stuff plays up as a reliever and he will not be as effective as a starter.